About me

I am a second-year PhD student at Stanford University, co-advised by Prof. Leonidas Guibas and Prof. Gordon Wetzstein. My research is generously supported by the Qualcomm Innovation Fellowship.

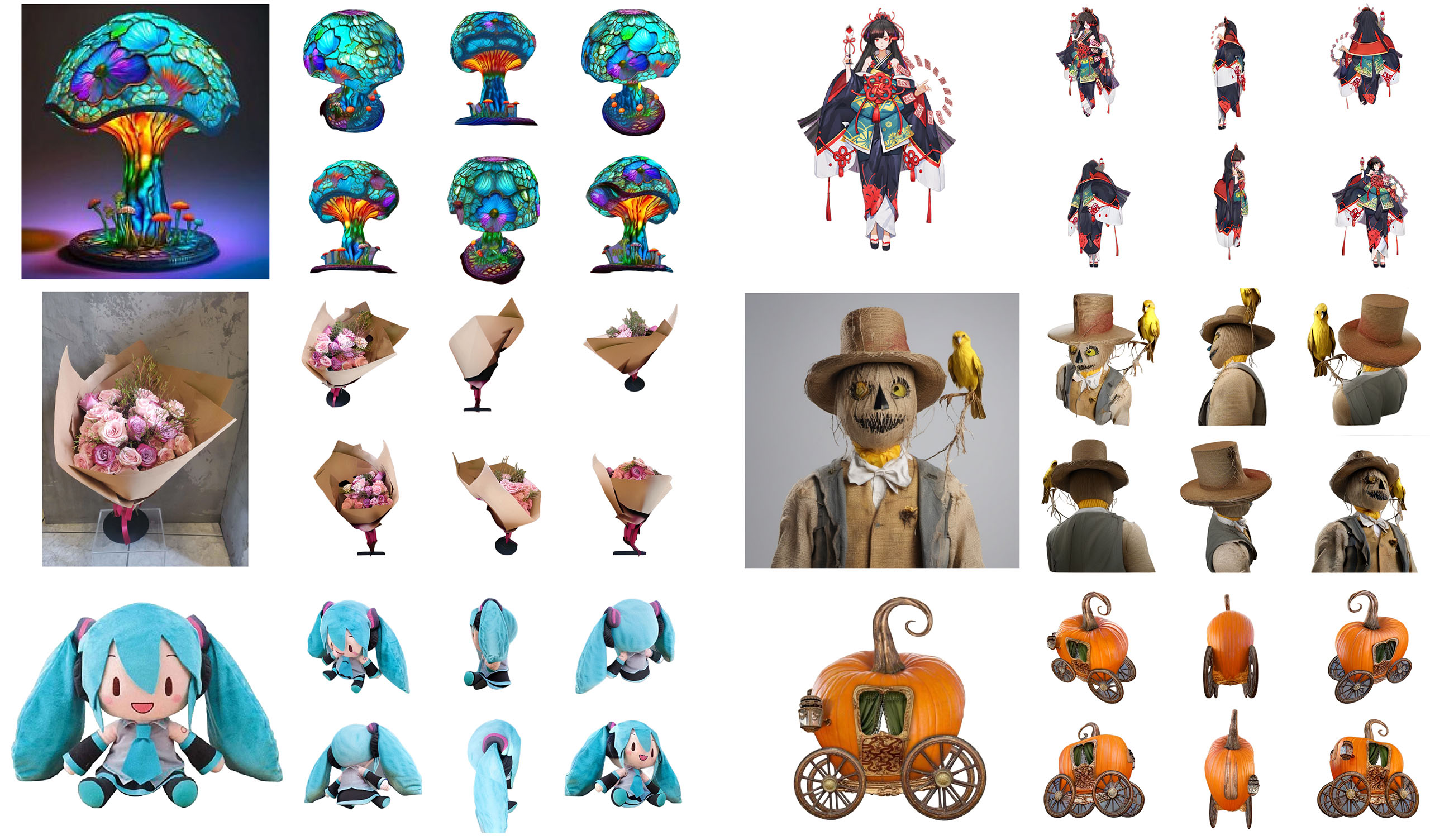

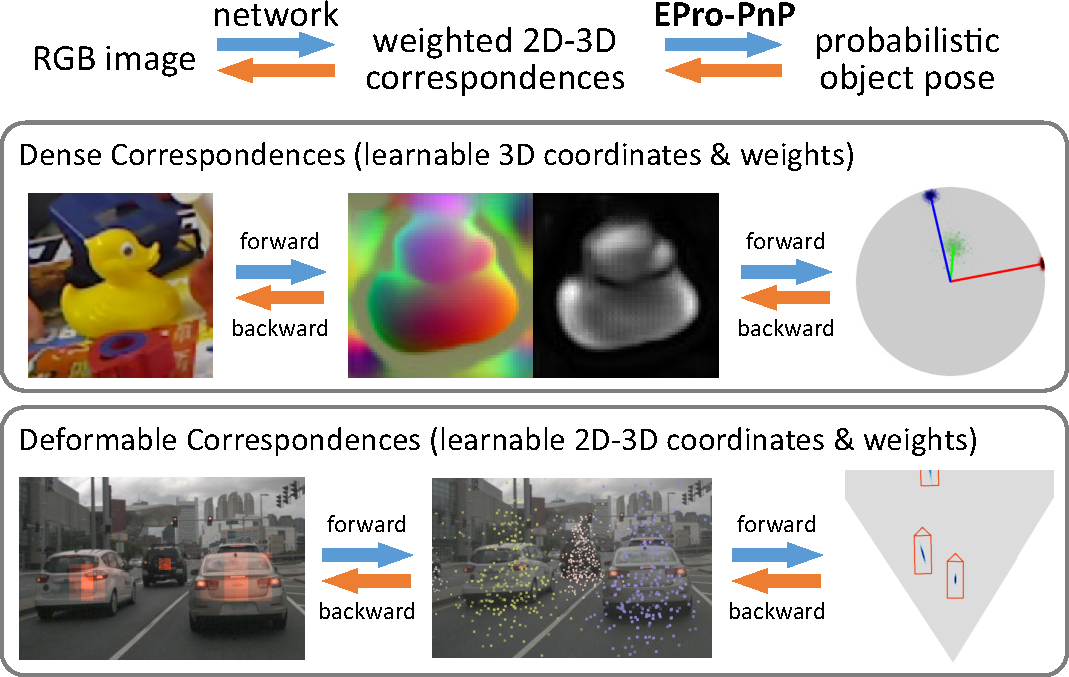

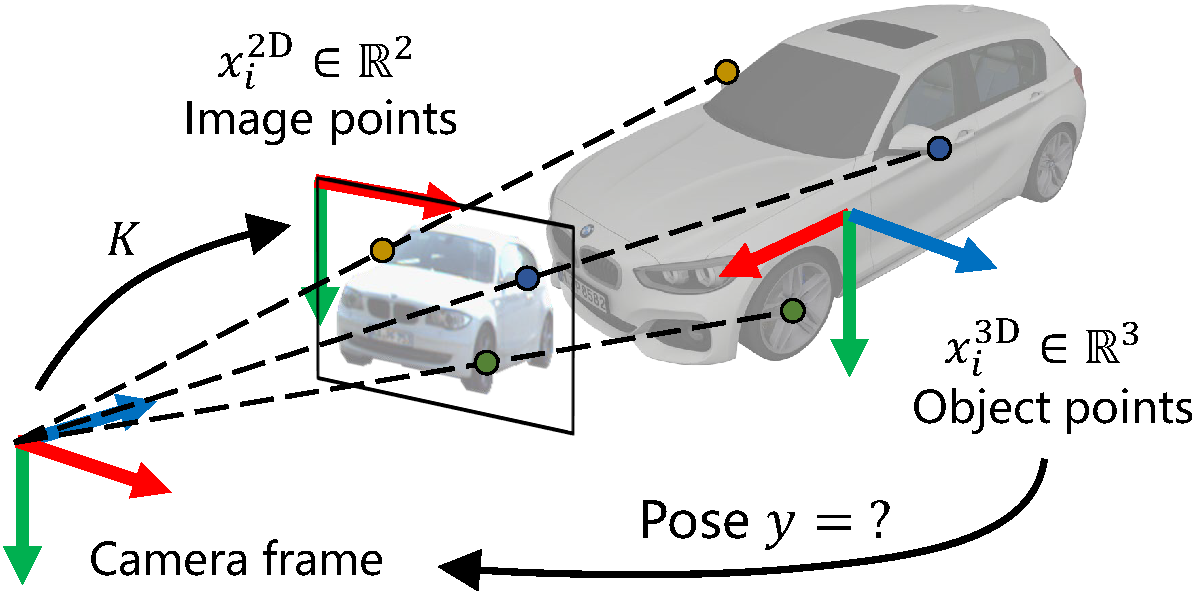

I am passionate about generative models and their applications in vision and graphics, with a current focus on diffusion models and 3D generation. Previously, I worked on image-based 6DoF pose estimation; my work EPro-PnP received the CVPR 2022 Best Student Paper Award.